Batch Prediction Requests¶

Pre-requisites¶

MinIO should already be installed with Seldon Enterprise Platform.

The MinIO browser should be exposed on /minio/ (note the trailing forward slash).

For trials, the credentials will by default be the same as the Enterprise Platform login, with MinIO using the email as its Access Key and the password as its Secret Key.

Note

Other cloud storage services, such as S3 and GCS, can be specified alternatively with the corresponding secret files configured.

On a production cluster, the namespace needs to have been set up with a service account. This can be found under the argo install documentation.

We will:

Deploy a pipeline with a pretrained SKlearn iris model

Run a batch job to get predictions

Check the output

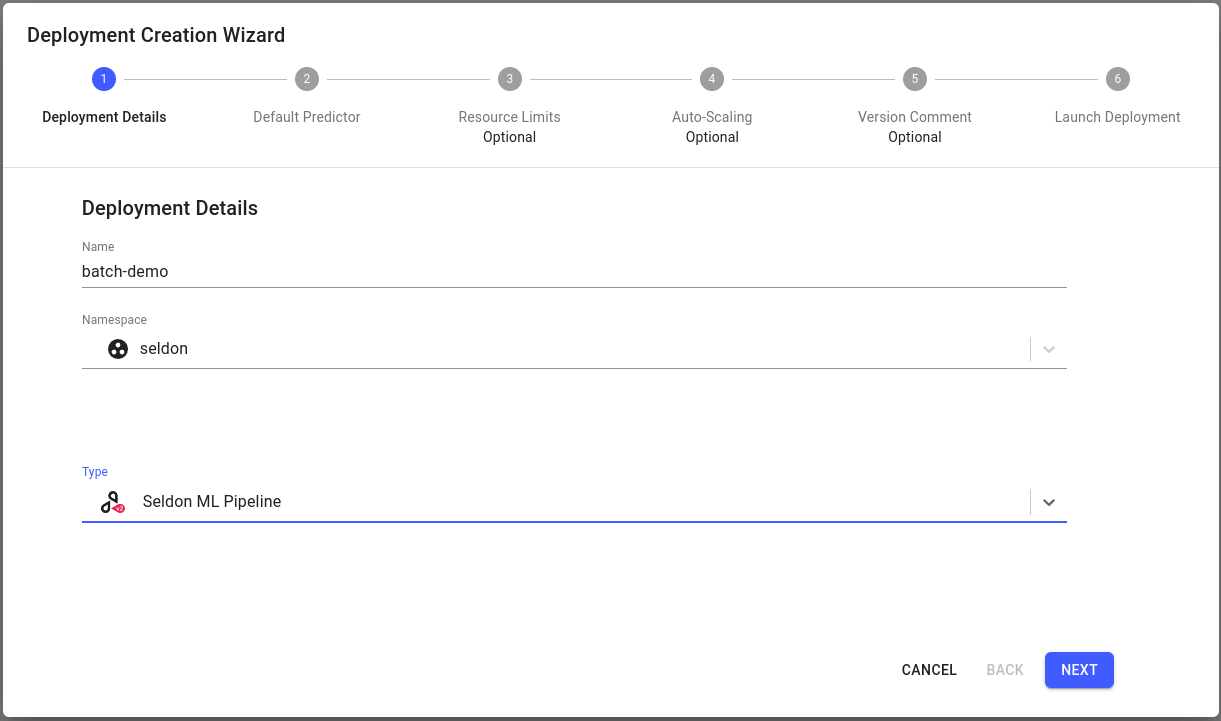

Create a Pipeline¶

Click on

Create new deploymentbutton.Enter the deployment details as follows:

Name: batch-demo

Namespace: seldon

Type: Seldon ML Pipeline

Configure the default predictor as follows:

Runtime: Scikit Learn

Model URI:

gs://seldon-models/v1.18.2/sklearn/irisModel Project: default

Storage Secret: (leave blank/none)

Additional Model Requirements: (leave blank/none)

Skip

Nextfor the remaining steps, then clickLaunch.If your deployment is launched successfully, it will have

Availablestatus, on the overview page.

Setup Input Data¶

Download the

input data file. Note that this input file is different from the input data from seldon-core-v1 batch prediction requests. The first few lines of the input file ‘input-data-v2.txt’ should show the following format:{"inputs":[{"name":"predict","data":[0.38606369295833043,0.006894049558299753,0.6104082981607108,0.3958954239450676],"datatype":"FP64","shape":[1,4]}]} {"inputs":[{"name":"predict","data":[0.7223678219956075,0.608521741883582,0.8596266157372878,0.20041864827775757],"datatype":"FP64","shape":[1,4]}]} {"inputs":[{"name":"predict","data":[0.8659159480026418,0.2383384971368594,0.7743518759043038,0.8748919374334038],"datatype":"FP64","shape":[1,4]}]}

Go to the MinIO browser and use the button in the bottom-right to create a bucket. Call it

data.Again from the bottom-right choose to upload the

input-data-v2.txtfile to thedatabucket.

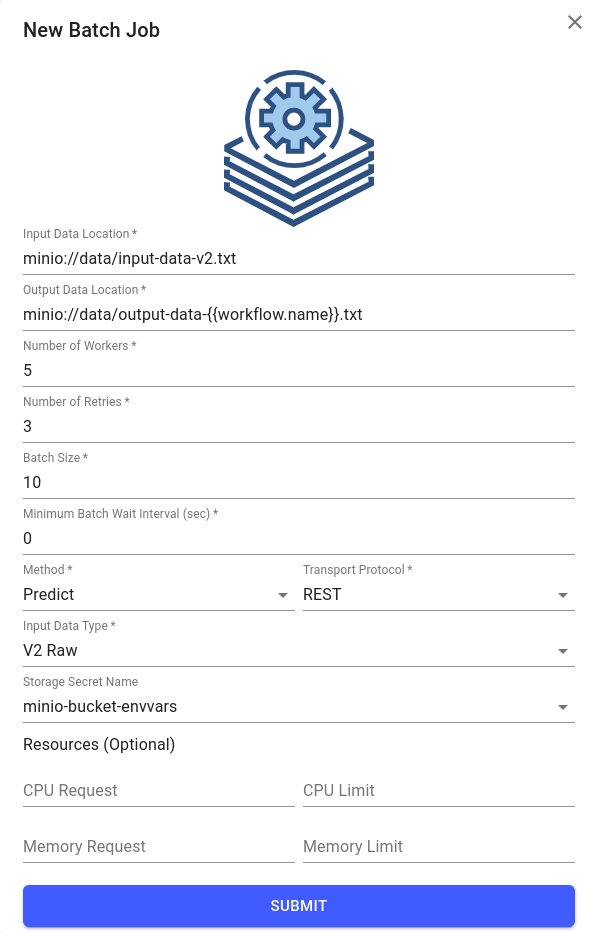

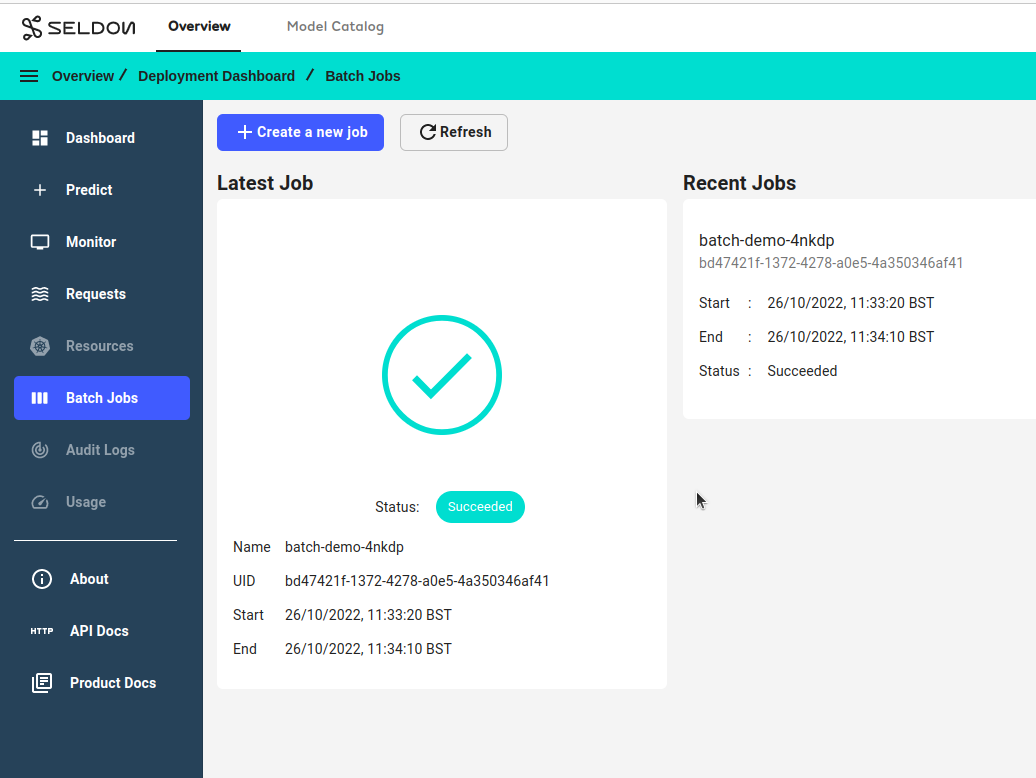

Run a Batch Job¶

Click on the tile for your new pipeline called

batch-demoin the Overview page of the Enterprise Platform UI.Go to the Batch Jobs section for this deployment by clicking on the

Batch Jobsbutton in the sidebar on the left.Click on the

Create your first jobbutton, enter the following details, and clickSubmit:Input Data Location:

minio://data/input-data-v2.txtOutput Data Location:

minio://data/output-data-v2-{{workflow.name}}.txtNumber of Workers: 5

Number of Retries: 3

Batch Size: 10

Minimum Batch Wait Interval (sec): 0

Method: Predict

Transport Protocol: REST

Input Data Type: V2 Raw

Object Store Secret Name: minio-bucket-envvars

Note

Here

minio-bucket-envvarsis a pre-created secret in the same namespace as the model, containing environment variables.Note

In the

Resources (Optional)section, you can specify how much memory and CPU are allocated to the containers in this specific batch job workflow. If no values are set on this form, the default values specified in Helm values will be used. Refer to the Kubernetes documentation on requests and limits for details.Give the job a couple of minutes to complete, then refresh the page to see the status.

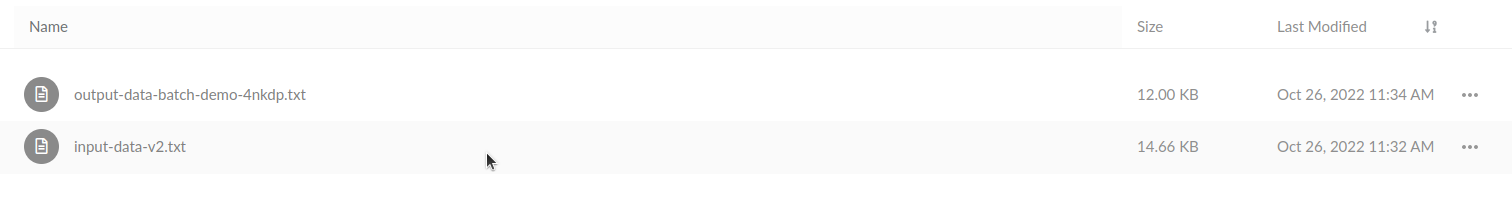

Inspect the output file in MinIO:

If you open that file you should see contents such as:

{"model_name":"","outputs":[{"data":[0],"name":"predict","shape":[1],"datatype":"INT64"}],"parameters":{"batch_index":0}} {"model_name":"","outputs":[{"data":[0],"name":"predict","shape":[1],"datatype":"INT64"}],"parameters":{"batch_index":2}} {"model_name":"","outputs":[{"data":[1],"name":"predict","shape":[1],"datatype":"INT64"}],"parameters":{"batch_index":4}} {"model_name":"","outputs":[{"data":[0],"name":"predict","shape":[1],"datatype":"INT64"}],"parameters":{"batch_index":1}}

If not, see the argo section for troubleshooting.

Micro batching¶

You can specify a batch-size parameter which will group multiple predictions into a single request.

This allows you to take advantage of the higher performance batching provides for some models, and reduce networking overhead.

The response will be split back into multiple, single-prediction responses

so that the output file looks identical to running the processor with a batch size of 1.